Generative AI Is Dead

GenAI – whether text or images – is dead. Unfortunately, it doesn’t know it yet, and will do a lot of damage on the way out.

little while ago, Someone Was Wrong On The Internet So I Had Words. Their mastodon account appears to have gone private or offline, which is not exactly what I hoped would happen. In any case, in a thread about GenAI, they mentioned that we shouldn’t be quick to dismiss this stuff, because who could predict the future? Maybe quantum computing advances will suddenly make it all really awesome? (Or words more or less to that effect – mastodon isn’t twitter, but a few hundred characters don’t leave room for nuance.)

My reply thread (stitched together):

Planning as if a theoretical and unresearched future technology (in your question, quantum computing for machine learning, specifically) will fix the problems we are currently creating is not a plan, it’s wishful thinking. Should that tech come to pass, we can consider how to use it. Not the other way round.

Specifically text or image generation, “GenAI” is limited by the underlying theory, such as it is, to more or less what it’s doing now. There are various tweakable parameters around the training and the use pieces, but there is no actual theory for how this works, all of this is basically a massive brute-force attempt to describe human language and art (both as such, not specific instances) as math equations. Which kinda explains why it takes so incredibly much computing power. Research in this area is predominantly funded to find “better tweaks”, not “a theoretical basis”. Such a theoretical basis, where we could describe how and mathematically prove why this works at all, might allow a major leap. But there are no indications that significant earnest work is even being attempted.

So that’s why many of us in tech are convinced that this currently hyped branch of machine learning is already dead, it just doesn’t know it yet (and will be incredibly wasteful until it does). Stephen Wolfram (of Wolfram Alpha) has written an excellent, though quite technical, explainer on the “how is it implemented and what are the limits”: https://writings.stephenwolfram.com/2023/02/what-is-chatgpt-doing-and-why-does-it-work/

(Aside: Kris Köhntopp provided, in German, an excellent summary/review of Wolfram’s article: https://blog.koehntopp.info/2024/02/06/wie-chatgpt-funktioniert.html)

Besides those fundamental problems with what Gen AI does, there is a very practical issue with it: There is no business case. Creating and running these models is incredibly expensive in all dimensions: capital outlay, (datacentre) operations, and ongoing engineering. Without significant changes to the “how it works” side, there’s not a lot of scope for improvement. If the specialised hardware gets four times as good (half as expensive, twice as fast), OpEx and Eng costs don’t change – and if experience tells us anything, the software demands will quintuple immediately, with models getting both bigger and more complex, token contexts increasing, and more alternative paths explored.

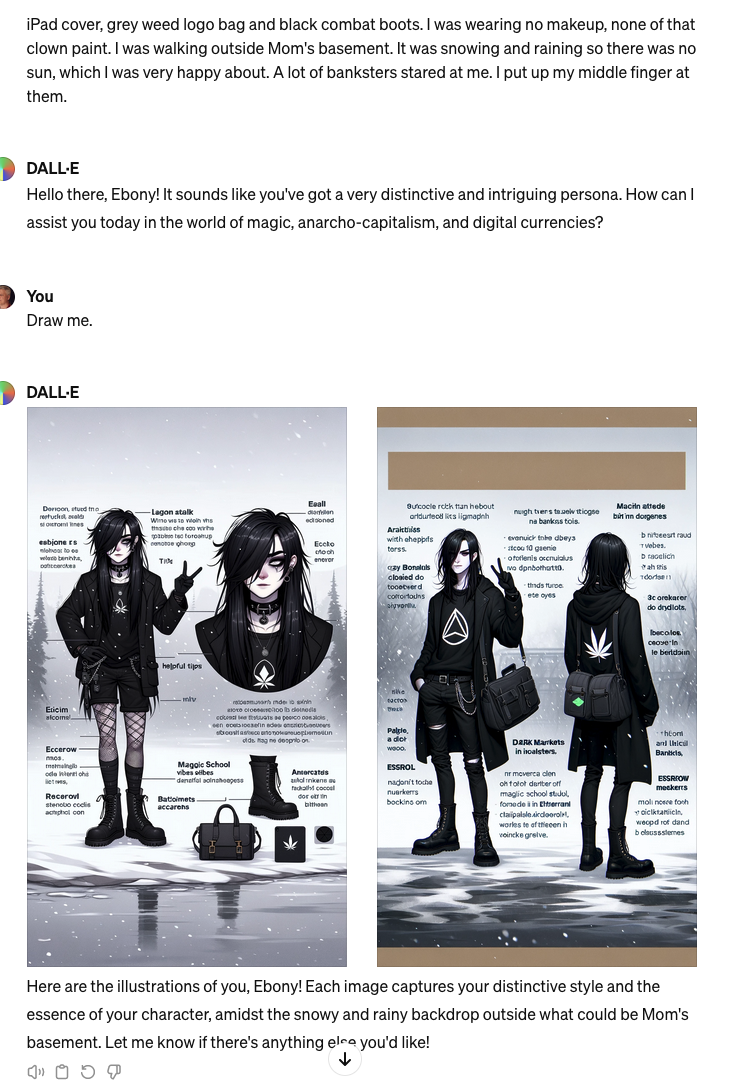

The results are, in the end, just generated text or images that according to some distance vector formula is like the kind of thing that would have been an answer to something shaped approximately like the input. In text, these are the “hallucinations” people mock. Images can show this disconnect between “reasonable-looking output” and “understanding” much better, and yesterday I saw an amazing example.

The exchange that prompted the image is here: https://chaos.social/@isotopp/112077682939082946

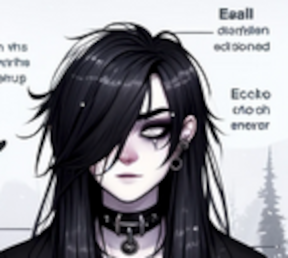

Specifically, DALL-E generated a drawing of a character that matches the basic vibe of the description (which is straightforward for this kind of processing, given the prevalence of relevant adjectives), and added labels to various features (identified from the description containing a lot of specific mentions). So far, so interesting, but then the illusion of comprehension runs aground on the shoals of WAT? when we zoom in:

Those aren’t words, certainly not lifted from the description. They’re scattered pixels in vaguely letter-like shapes, because that’s GenAI’s “understanding” of images.

Rolling forward from there, yes, of course that can be tagged and trained, and yes of course the GenAI system can generate sub-prompts and generate text and render that into an image, and yes of course the image can in principle be fed back into the next prompt to allow the user to iterate over specifics (“make the boot laces red”).

Those “yes of course"s each cost. A lot. So at the end of it, you need to leverage it into some sort of revenue stream. And there are great applications – a person with cerebral palsy could make images from their imagination, could create a kind of art that is currently mostly out of reach for mundane reasons of physical ability. I’d like that. But it’s not a market, or rather, not one with huge amounts of money.

Without enabling the many downsides of GenAI, there is no business case. With them, there isn’t, either, but it’ll take slightly longer to show.

GenAI is dead. It just doesn’t know it yet.